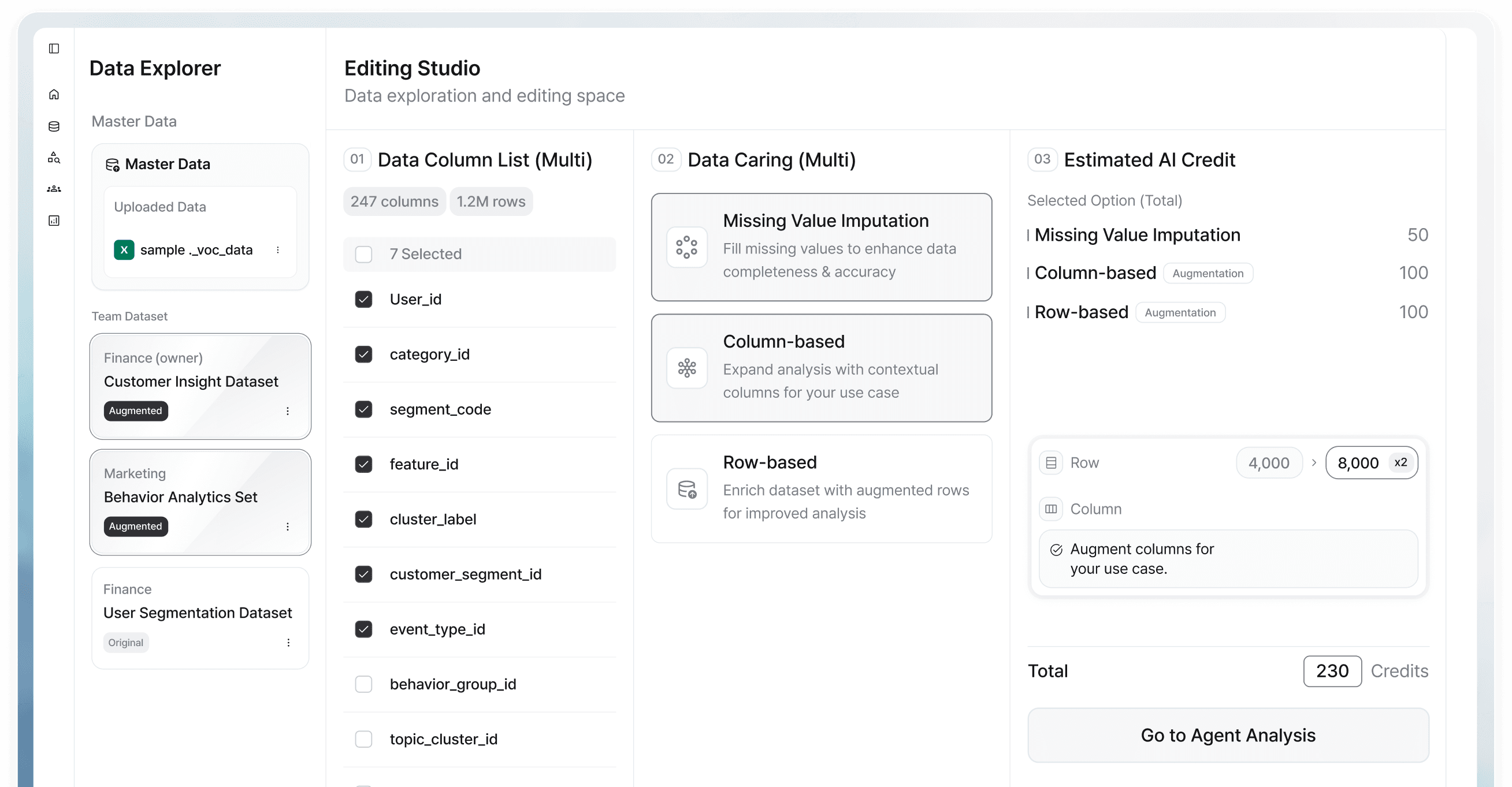

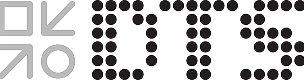

Enterprise AI fails before production due to restricted, unusable, and unstable data. CUBIG transforms raw data into reproducible, AI-ready states.

At CUBIG, AI-Ready Data means data that is usable, accessible without regulatory barriers, context-preserving, and fully traceable when things break.

Repair, rebalance, and safely augment data into a synthetic-first layer that AI can actually run on.

Enterprise AI fails after deployment because data is restricted or unusable, or because execution becomes unstable in production.

60% of AI projects will fail by 2026 without AI-optimized data infrastructure

30% of GenAI projects are abandoned after PoC, before reaching production

42% of US enterprises halted most AI initiatives, up from 17% the prior year

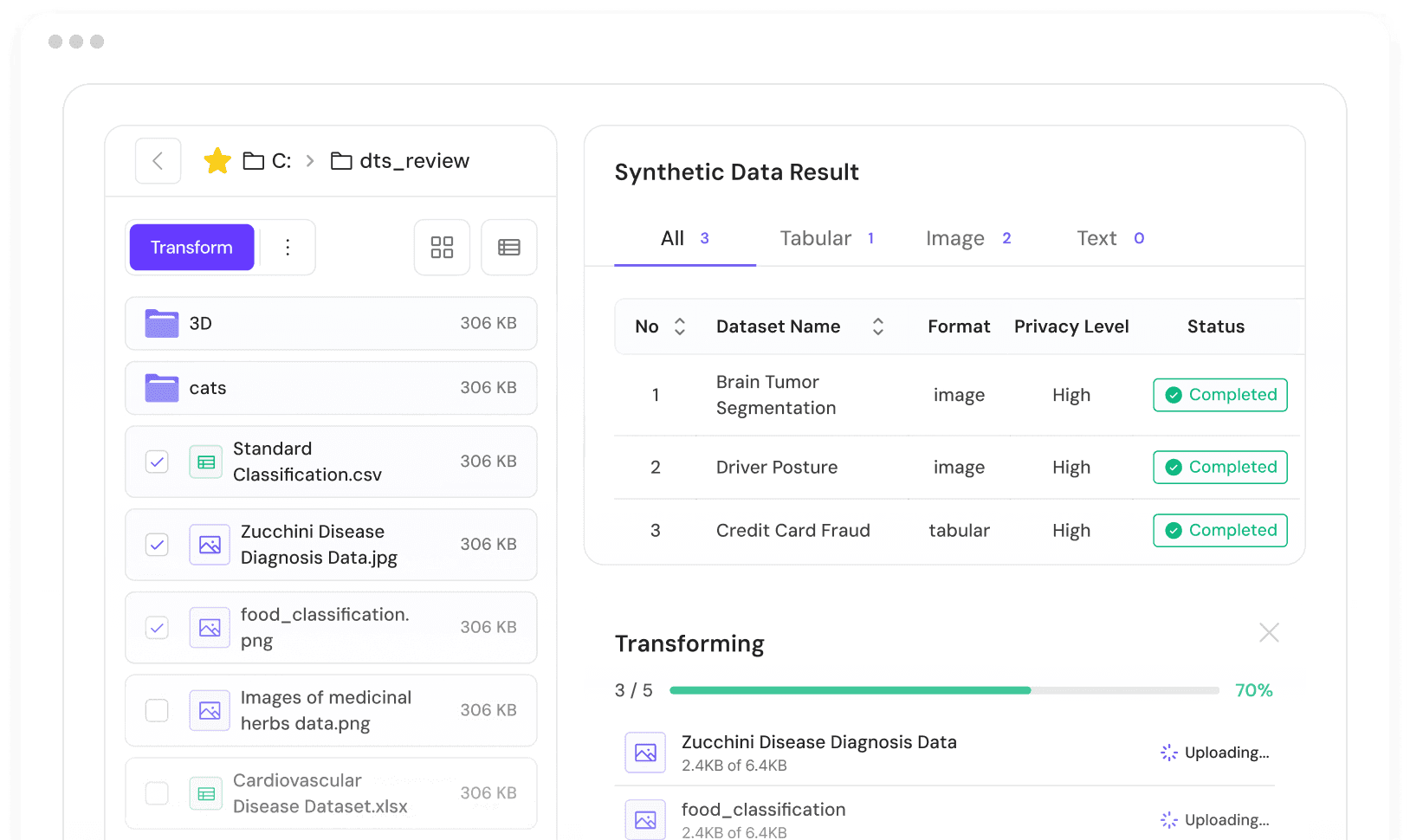

When AI systems fail in production, the cost is rarely the model itself. Teams spend days or weeks isolating the cause — checking models, retraining pipelines, or rebuilding datasets — without visibility into what execution conditions actually changed. Without execution traceability, debugging becomes guesswork.

These incidents slow AI deployment cycles and reduce organizational trust in production systems. Projects that worked correctly in development get deprioritized — not because the model was wrong, but because no one can reliably explain why the results changed after deployment.

Sensitive information is protected while data remains useful and compliant for AI workflows.

Data can actually be used for training, validation, and decisions — not just stored or partially accessible.

AI runs stay reproducible even as environments, schemas, and pipelines change over time.

Infrastructure that makes enterprise data usable, privacy-safe, and stable for production AI execution.

Structured & unstructured

Fixes unusable data · data-level privacy

Fixes unstable execution

Fixes inference-level privacy

Stable production models · rare-event coverage

Privacy-safe insights · churn prediction

Survey · price strategy · instant research

What-if scenarios · regulatory impact

LLM on internal data · RAG · PII-safe

Enterprise RAG · secure knowledge base

Schema fingerprinting · version-locked runs

ISO 27001 · ISO 42001 · GS Certified · AWS Marketplace · 10+ Patents

Enterprise AI failures are not random. They trace back to one of three structural blockers. Find yours.

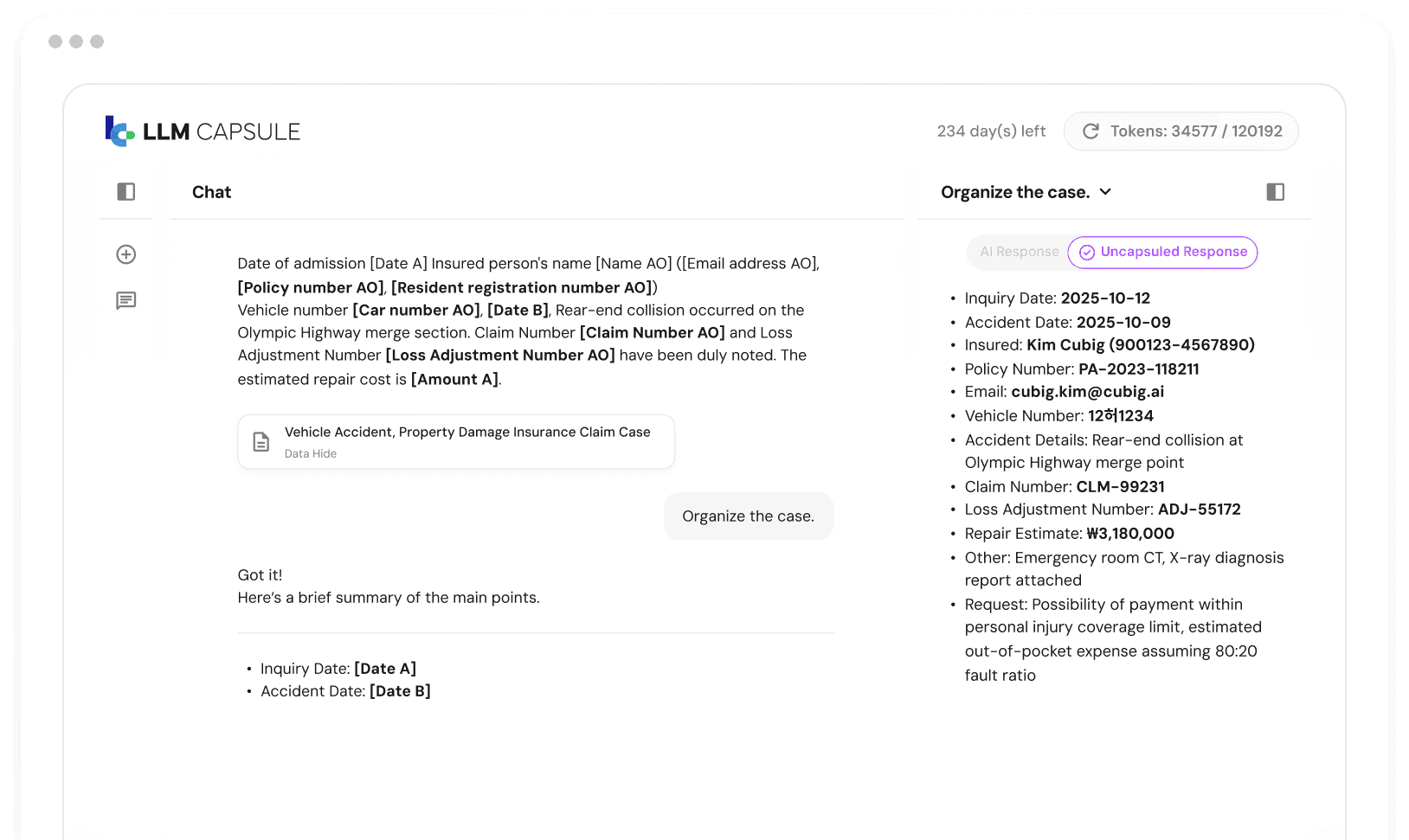

"We want to use LLMs on enterprise data, but sensitive fields block us."

PII, internal identifiers, regulated records: employees can't send these to an LLM. Compliance blocks adoption. Projects stall.

LLM Capsule removes the blocker. Available on AWS Marketplace. GS Certified.

Explore LLM Capsule →

"We have data but it's restricted, imbalanced, or too incomplete to train on."

Access controls, coverage gaps, rare classes, privacy constraints: the data exists but AI can't use it. Projects can't start.

"AI worked in PoC but fails or produces inconsistent results in production."

Schema changes, pipeline updates, silent data drift: execution state is never fixed. Results change after deployment. Root cause takes weeks to find.

SynTitan stabilizes execution state. Core platform. Try at syntitan.ai

Explore SynTitan →

Databricks, Snowflake, and dbt solve storage, query, and pipelines.

They do not solve AI execution stability, sensitive data blockers, or unusable training data.

That is a different layer. That is what CUBIG builds.

Three distinct entry points. Each maps to a specific production blocker.

Teams usually come in through one – and then expand from there.

Teams adopting external LLM APIs often discover that enterprise documents contain sensitive fields that cannot be safely transmitted.

Compliance blocks adoption. Projects stall at the data access layer.

LLM Capsule removes that blocker by anonymizing sensitive data inline during LLM interaction – PII never reaches the external model.
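As a rough illustration of what inline anonymization can look like, the sketch below swaps sensitive values for placeholders before a prompt leaves the trust boundary, then restores them in the response. It is not LLM Capsule's implementation: the two regex patterns, the placeholder format, and the `mask` / `unmask` helpers are assumptions made for this example, and real PII detection needs far more than two regular expressions.

```python
import re

# Hypothetical patterns for illustration only; real detectors cover many more field types.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}-\d{3,4}-\d{4}\b"),
}


def mask(text: str) -> tuple[str, dict[str, str]]:
    """Replace each detected value with a placeholder and return the mapping."""
    mapping: dict[str, str] = {}
    for label, pattern in PATTERNS.items():
        def substitute(match: re.Match, label: str = label) -> str:
            value = match.group(0)
            # Reuse the placeholder if the same value appears again.
            for placeholder, original in mapping.items():
                if original == value:
                    return placeholder
            placeholder = f"[{label}_{len(mapping) + 1}]"
            mapping[placeholder] = value
            return placeholder
        text = pattern.sub(substitute, text)
    return text, mapping


def unmask(text: str, mapping: dict[str, str]) -> str:
    """Restore original values in a response that echoes the placeholders."""
    for placeholder, value in mapping.items():
        text = text.replace(placeholder, value)
    return text
```

In this sketch only the masked text would be sent to the external model. The mapping stays on the caller's side and is applied to the response with `unmask`.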

Teams building classification or detection models often find that rare classes are underrepresented, privacy rules prevent using original data, or access restrictions block the pipeline entirely. The data exists — it just cannot be used.

DTS generates privacy-safe synthetic datasets to expand coverage and fix imbalance when real data is restricted or incomplete.
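To make the rebalancing idea concrete, here is a minimal sketch of a simplified, SMOTE-like oversampler that creates new minority-class rows by interpolating between random pairs of real ones. This is a stand-in technique chosen for brevity, not how DTS works: the `oversample_minority` helper is hypothetical, it pairs rows at random rather than by nearest neighbour, and plain interpolation provides no privacy guarantee on its own.

```python
import random


def oversample_minority(rows, target_count, seed=0):
    """Create synthetic rows by interpolating between random pairs of real minority rows."""
    if len(rows) < 2:
        raise ValueError("need at least two minority rows to interpolate")
    rng = random.Random(seed)
    synthetic = []
    while len(rows) + len(synthetic) < target_count:
        a, b = rng.sample(rows, 2)
        t = rng.random()  # position along the segment from a to b
        synthetic.append([x + t * (y - x) for x, y in zip(a, b)])
    return synthetic
```

For example, calling `oversample_minority(rare_rows, 500)` on a list of numeric feature vectors would return enough synthetic rows to bring that class up to 500 examples.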

Teams operating production AI pipelines encounter results that change without model updates — schema drift, pipeline changes, runtime variance. Debugging takes weeks because there is no traceability layer to isolate which execution condition changed.

SynTitan binds every AI run to a versioned Release State — making execution conditions traceable, comparable, and reproducible on demand.
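One way to picture a versioned execution state is a small record that fingerprints the input schema and pins pipeline and model versions, so two runs can be compared field by field. The sketch below is illustrative only: the `ReleaseState` fields, `schema_fingerprint`, and `diff_states` are assumptions for this example, and the actual contents of a SynTitan Release State are not described here.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


def schema_fingerprint(schema: dict[str, str]) -> str:
    """Hash column names and types in a stable order so equal schemas hash equally."""
    canonical = json.dumps(sorted(schema.items()), separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ReleaseState:
    # Hypothetical fields; the real Release State contents are not specified in the text.
    schema_hash: str
    pipeline_version: str
    model_version: str


def diff_states(old: ReleaseState, new: ReleaseState) -> dict[str, tuple[str, str]]:
    """Return the fields whose values differ between two recorded runs."""
    before, after = asdict(old), asdict(new)
    return {k: (before[k], after[k]) for k in before if before[k] != after[k]}
```

When results change without a model update, comparing the state recorded at deployment with the current one narrows the search to the fields that actually differ, for instance a changed `schema_hash` after a column type was altered upstream.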

These are real deployment patterns across actual enterprise environments. Each reflects a specific production blocker removed by CUBIG infrastructure.

Improved anomaly detection reliability and expanded validation coverage for rare event classes.

Customer insights generated and analytics pipelines unblocked — without exposing personal data at any stage.

Complaint classification accuracy improved. Cross-team analysis enabled without exposing original customer records.

Early detection of opinion leader influence and policy sentiment shifts — before they escalate to public-facing issues.

Trend insights delivered significantly faster — without collecting personal data from survey respondents.

Clear behavioral insights and community sentiment trends — integrated from fragmented data sources into reliable production pipelines.

Production AI failures cost weeks of incident recovery time.

The root cause is almost always data or execution state — not the model.

Let's find yours in 30 minutes.

30-min architecture review · no commitment · engineers-first conversation

Access control, audit logging, and separation of duties built into the operational workflow.

Full traceability of data lineage, transformations, and AI execution states across environments.

Designed to operate within regulated industries. Enterprise-grade data handling principles throughout.

Available via enterprise marketplace channels. Procurement processes supported from first contact.

On-premises, cloud, or enterprise marketplace deployment. Flexible to fit your existing infrastructure and security posture.

Raw data boundary enforcement, data minimization, and policy-based handling across all workflows.

We're not a PoC vendor — we're the production AI infrastructure layer enterprises were missing.

Awards & certifications incl. 4 Ministerial Prizes, GS & KISA

Patents (8 domestic incl. 3 registered, 2 overseas)

Founded · Seongnam-si, Korea · UK entity established

Unlock the full power of synthetic data with CUBIG — generate, integrate, validate, and scale multimodal data across industries — without ever exposing the original.

Contact CUBIG →